
Technology

- Claude in Iran

- How voters feel about AI rules

- Autodesk’s AI ‘moat’

- Airwallex expands in US

- Prediction-market policies

Anthropic’s Dario Amodei stumbles in his feud with the Trump administration, and an AI model writes an entire microbial genome.

When Dario Amodei and a group of OpenAI employees left to create Anthropic, Amodei filled the “wise professor” role, openly discussing his thoughts on Slack (and trusting employees to keep what he said confidential). That openness remained, even as Anthropic transitioned from a small research group to a massive company raising tens of billions of dollars from a wide range of profit-hungry investors. Today, Anthropic is so big, so powerful, that every word uttered by its CEO is a potential news story. That’s especially true now that the company is locked in a war of wills with the US military over the use of its AI models.

For a moment, Anthropic looked like it had the upper hand. Its stand against the use of AI for surveillance had ginned up an outpouring of support from fans, who wrote messages of encouragement in chalk outside its corporate headquarters. The White House was working on a way to de-escalate the situation, according to people involved in the discussions, so that the military could keep using the technology it needs and Anthropic could keep growing and developing critical national security tools.

But on Wednesday, that all changed. The Information obtained a leaked memo that Amodei wrote, railing against rival OpenAI and calling Trump a petty dictator. The administration was furious, according to people familiar with the matter, and the memo egged officials on to follow through with threats to designate the company a supply chain risk, which could be devastating for the company’s bottom line.

“If their model has this policy bias based on their constitution, their culture, their people, and so on, I don’t want Lockheed Martin using their model to design weapons for me,” Under Secretary of Defense for Research and Engineering Emil Michael said on the All In podcast Friday. “Boeing wants to use Anthropic to build commercial jets — have at it. Boeing wants to use it to build fighter jets — I can’t have that because I don’t trust what the outputs may be because they’re so wedded to their own policy preferences.”

While Anthropic will have a good shot at taking on the Pentagon in court, there was little need for Amodei to pour fuel on the fire with a memo that would no doubt leak. Everyone at the company already knew how he felt from his public protest. On Thursday, in an attempt to placate the White House, Amodei apologized, saying the memo didn’t reflect his “careful or considered views.”

The thing is, Amodei is a fast learner. He’s adapted quickly to the reality that he’s no longer running a research organization. The board would be shortsighted to replace Amodei with a professional manager, especially if he comes out of this mess a more mature and calculating CEO. The question is whether Anthropic can hold onto its soul in the process.

The risk of mythologizing AI in war

An aerial view of Tehran. 2026 Planet Labs PBC/Handout via Reuters.

Since the US began bombing Iran, we’ve been reading about how Claude was used to determine where to send missiles. The New York Times wrote in an opinion piece that Anthropic’s technology reportedly helped with targeting, and that one of the first targets was possibly an elementary school where 175 people died.

It’s not that simple, and mythologizing AI in the military can lead us down a worrisome path. These targeting systems are massive and complex operations. They often involve many different kinds of software beyond the generative AI that we see in consumer chatbots like Claude. And behind every bomb dropped and missile fired by the US, there’s a human being who is ultimately responsible for what happens.

When asked on the All In podcast about the concern that autonomous systems are executing kill orders, Emil Michael said, “We’re not even close to there yet. We wouldn’t feel that a system that would have real risk for a civilian is ready to launch yet.” In another podcast, former Pentagon official Michael Horowitz called AI systems a “decision aid,” adding that the US military has been “conservative in some ways when it comes to the integration of AI in general, let alone a tool like Claude.”

It’s not an excuse to say “Claude told me to drop a bomb on that location and I just trusted it,” and implying without evidence that this is happening is irresponsible. Looking at AI through an emotional lens could lead to bad decisions about AI laws and regulations, and end up derailing important military and national security uses.

— Reed Albergotti

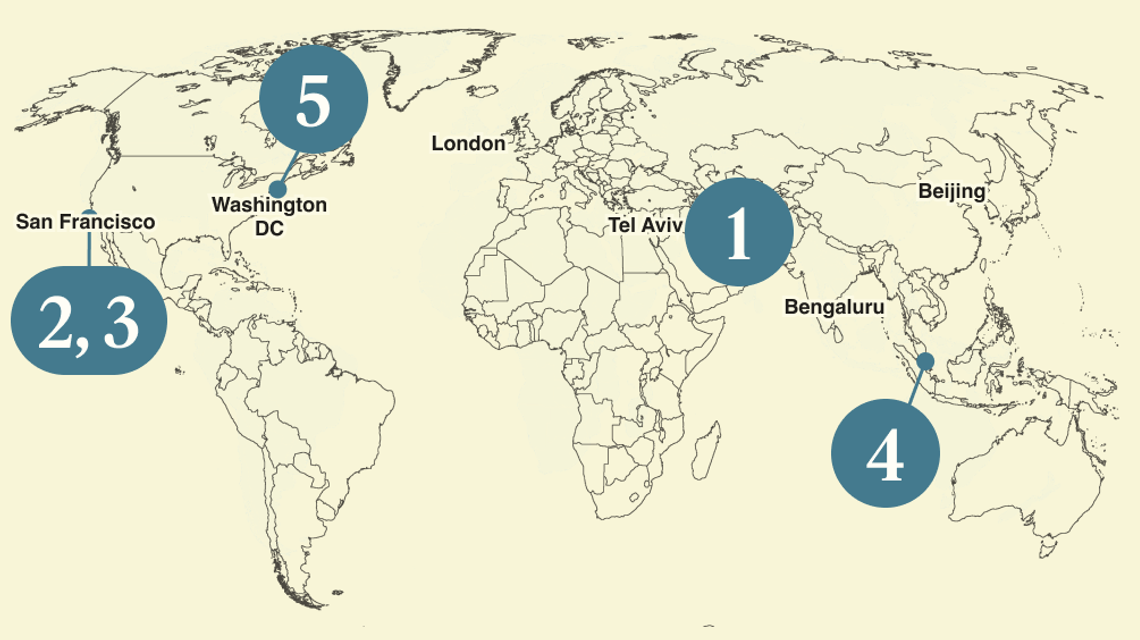

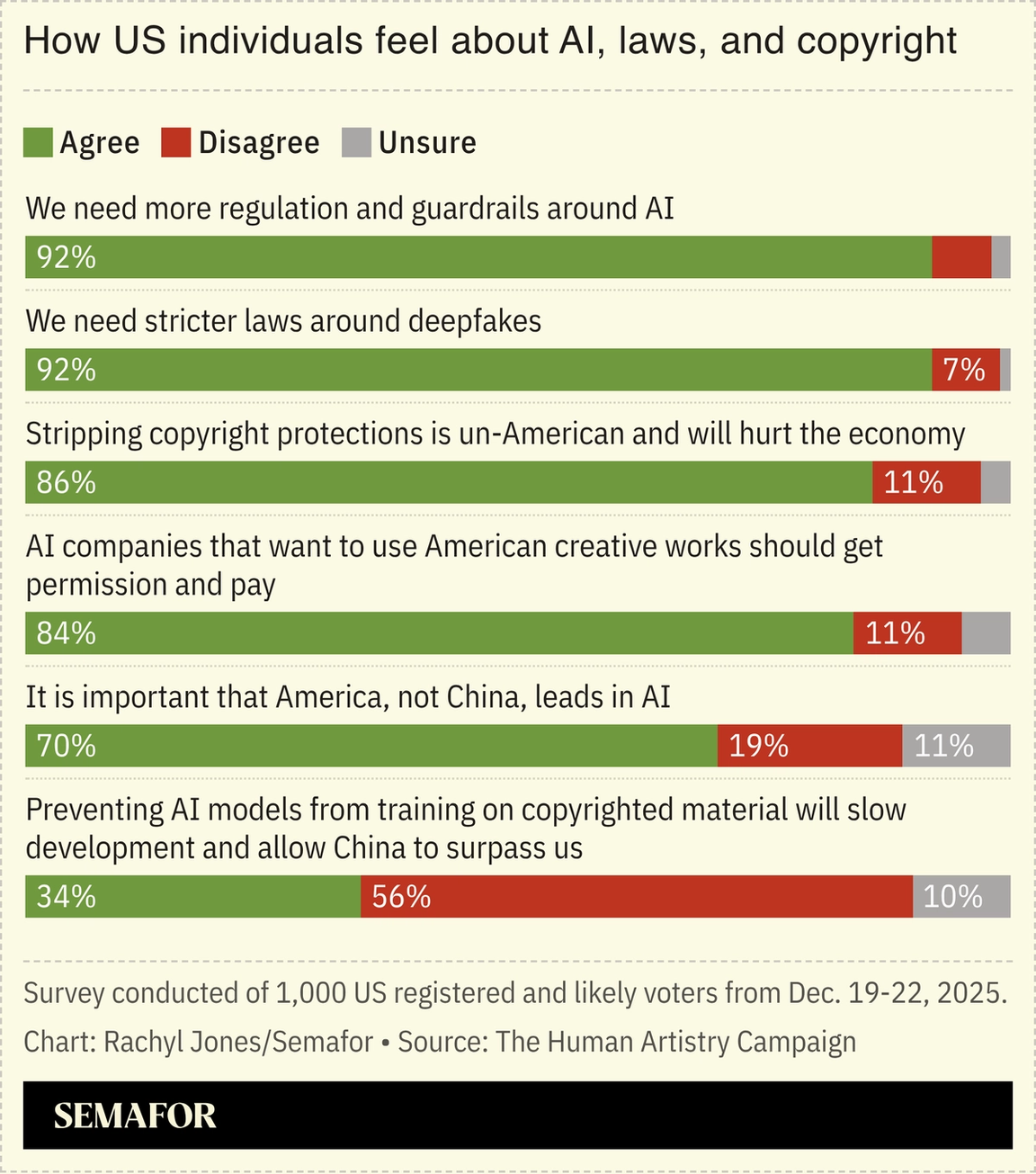

AI rules more popular than beating China: survey

AI guardrails and copyright protection are some of the most bipartisan issues in the US right now, according to a new survey shared exclusively with Semafor. Both are more popular than the belief that it is important for America to lead China in technology.

The Human Artistry Campaign, a coalition of creative organizations advocating for responsible AI use, commissioned the poll. The coalition includes actors, musicians, writers, and others whose jobs and art are threatened by AI.

The results reveal a disconnect between how people in power have approached the AI race and how voters feel about it. Competition with China is one of the primary drivers, if not the top driver, of national AI policy in the US, and companies have advocated for minimal restrictions to build out their technologies.

“This is not an abstract concern,” the group wrote in its report. “It is a vote-moving issue, with intensity that cuts across ideology and demographics.”

— Rachyl Jones

Autodesk building AI ‘moat’

Courtesy of Autodesk/Joey Pfeifer/Semafor

Bruising drops in software company stocks this year have left investors struggling to discern which firms will be hurt by competition from AI agents, Semafor’s Andrew Edgecliffe-Johnson reports. “They’re trying to figure out who’s the Amazon.com and who’s the Pets.com,” says Autodesk CEO Andrew Anagnost, flashing back to two symbols of the dot-com boom and bust. But “the rubric’s a little bit more complicated” now, he adds.

Anagnost says his San Francisco-based firm, which sells software to architects, engineers, and manufacturers, has three defensive qualities that will determine success in the agentic future. The software-as-a-service (SaaS) companies that manage to thrive, Anagnost argues, will be those in high-stakes industries where a supplier’s answers can’t just be “probably right”; those that serve clients operating in complex contexts; and those that possess both scarce data and the ability to apply expert understanding to that information.

Payments startup Airwallex expands to US

Anshuman Daga/Reuters

Airwallex, the global payments startup, has crossed the $1 billion mark in assets under management for its money market service, the company shared with Semafor exclusively, and is expanding the service to the US. Offering a high-yield savings product might seem like table stakes in a crowded market, but the company believes it has a unique trick up its sleeve: eighty banking licenses across the globe that allow it to move customer money across borders essentially for free.

Airwallex hopes the infrastructure, which took a decade to build, will put it in a good position to capitalize on the AI boom, which is driven by subscriptions and API fees. And as the infrastructure grows, micropayments that cross borders could become part of the fabric that enables “agentic commerce.”

Of course, stablecoin companies, built on advanced blockchain technology, would have something to say about that. Airwallex’s cofounder and CEO Jack Zhang has been something of a curmudgeon when it comes to blockchain, but one can’t deny it’s a low-fee alternative to traditional banking and works even in markets where traditional financial rails are nonexistent or nonfunctioning. Airwallex is a reminder that fintech disruption can come in many forms, and isn’t just an either-or choice between traditional banking and blockchain.

— Reed Albergotti

How companies deal with prediction markets

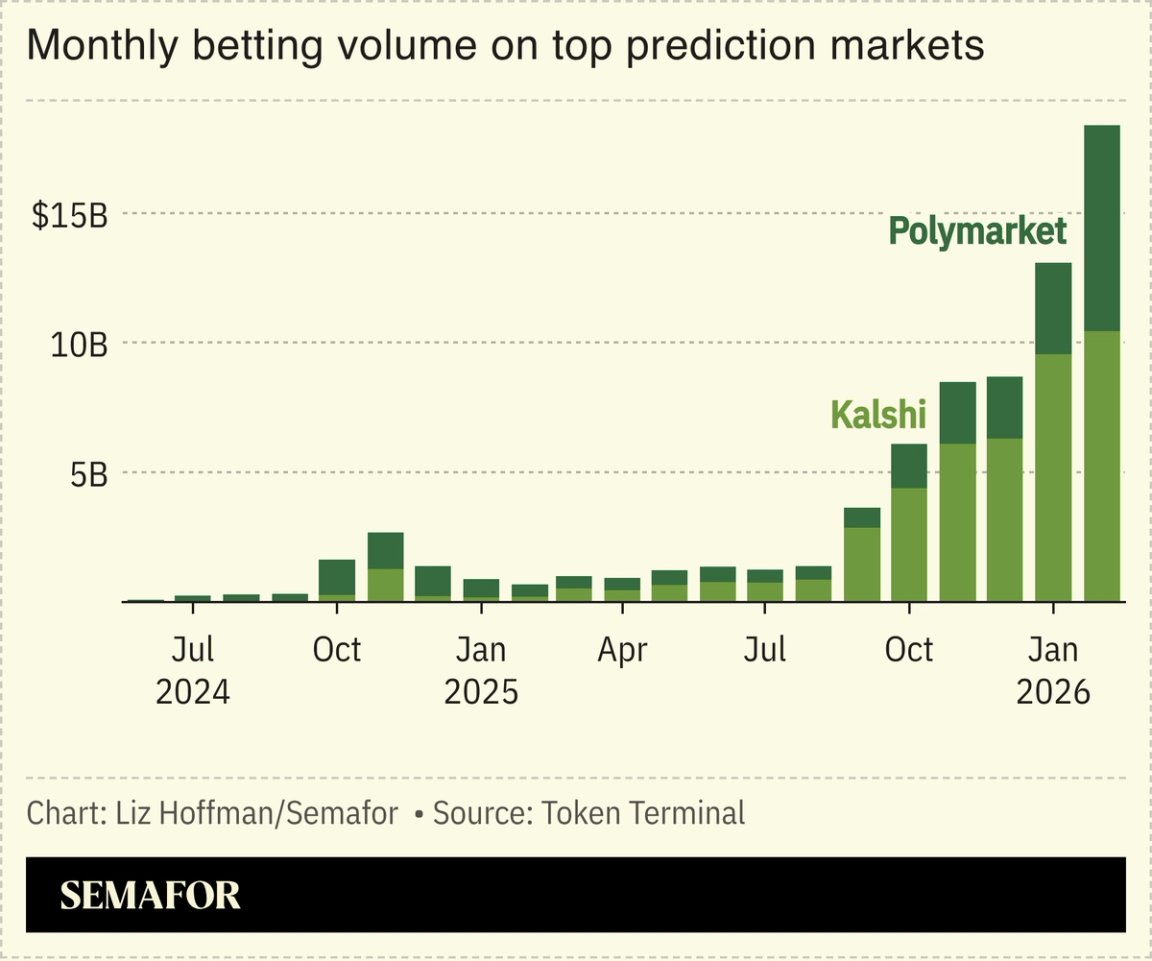

With prediction-market bets soaring on everything from what MrBeast will say during his next YouTube video to what Apple will unveil at its next event, the risk that corporate employees use insider information to bet on Kalshi and Polymarket is growing. But it seems companies — like government regulation — haven’t kept up.

Semafor reached out to more than 100 companies, law firms, banks, PR shops, and hedge funds, and found that few had explicit rules around prediction markets. Others, like Google — whose 2025 Year in Search headlines appear to have been front-run by someone who knew the numbers ahead of time — had no comment.

Some exceptions: OpenAI recently updated its company policy to clearly ban employees from using confidential info to bet on platforms like Polymarket and Kalshi — and then fired a worker who violated it. Ditto for MrBeast’s holding company, which instituted a similar policy and put an employee on leave after some Kalshi insider trading drama. United Airlines said its ethics rules don’t explicitly mention prediction platforms but do “prohibit using your position for your personal gain,” which would apply to Polymarket and Kalshi profits.

If you’re reading this and would like to look front-footed on the largest corporate scandal about to hit your company, reach out.

Since 2018, Hasan Piker has gone from streaming alone in his bedroom to becoming one of North America’s most influential leftist creators. This week on Mixed Signals, he sits down with Ben and Max to talk about getting older with his audience, the grind of broadcasting all day, seven days a week, and whether constant livestreaming changes the way he thinks. Piker also talks about his relationship with Democratic politicians like Zohran Mamdani, antisemitism, and dialing back his rhetoric as he accrues real political power.

Bernadett Szabo/Reuters

An AI wrote an entire microbial genome. The Evo2 model was trained on 9 trillion DNA letters much as other AIs train on internet text, and when given a chunk of a microbe’s genome, was able to create a plausible-looking version of the rest. The researchers did not synthesize the resulting microbe, and it likely would not have lived if they had — DNA is less forgiving of errors than English text — but they did demonstrate significant control: In a separate experiment, Evo2 wrote DNA sequences that encoded Morse code messages in the shape of the chromosomes. Further advances are needed to create true AI-generated life, but “these AI models are the ‘ChatGPT moment’ for synthetic genomics,” one researcher told Nature.