In this edition, AI might be turning all of us into ‘inoffensive, center-left’ newspaper columnists,

Technology


- Conflicting AI ideologies

- Automation vs. augmentation

- Agents are hiring humans

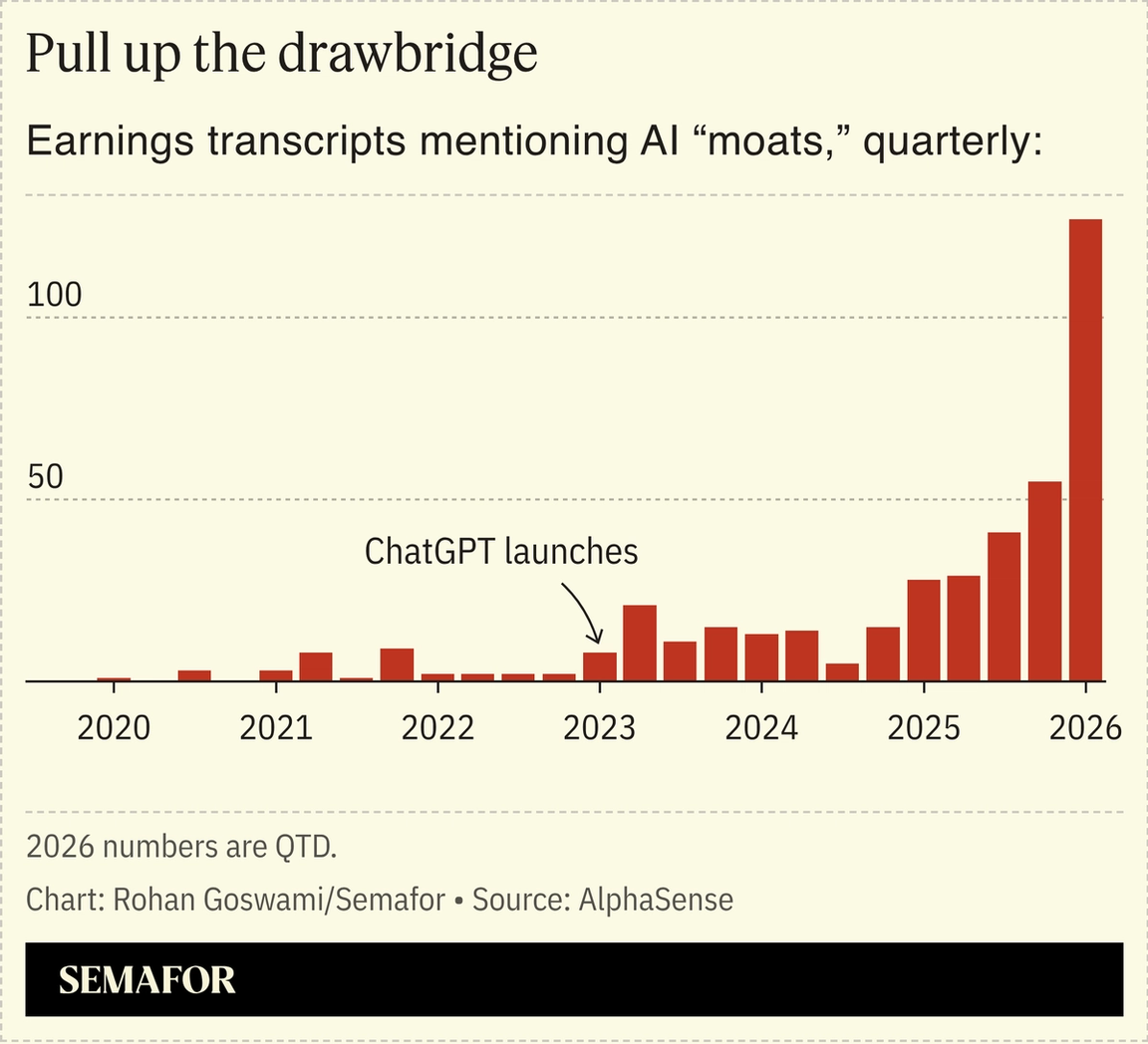

- CEOs’ new favorite word

- New chips on the table

Cerebral AI slop, and Anthropic’s efforts to get its models to obey rules appear to be working.


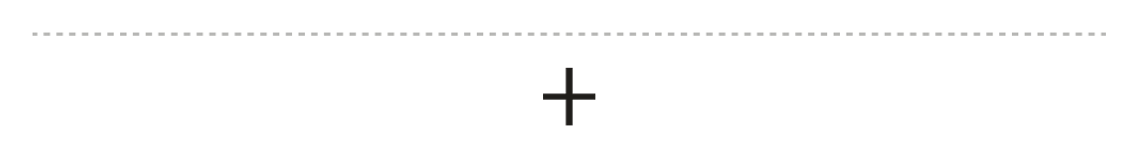

The other day I asked one of the most voracious readers I know for the best business books he’s read recently. Like a lot of my productivity-maxxing friends these days, he sent me a Claude readout, beginning with Thomas Friedman’s 2005 book The World Is Flat and followed by a series of similar shopworn airport classics.

Friedman’s paean to globalization was the embodiment of conventional wisdom two decades ago. Now, it’s at the core of a fading center-left consensus that has lodged itself deep in our new shared digital brains, via a vast body of text that includes Friedman columns (part of a sprawling New York Times lawsuit against OpenAI) and the derivative corpus, from Reddit to old newspaper columns.

We’re in a moment of politicized concern about AI ideology. Elon Musk fears the technology will spread the “woke mind virus.” Tyler Cowen is pressing to be sure it will have a “Western soul.” My more modest worry is that these brilliant machines will steer us in a kind of backward loop to the conventional wisdom of the recent past: a kind of cerebral slop.

When I asked my boss about this concern, he replied that the problem is in the machine. The AI “trains models to please human raters, and human raters tend to prefer agreeable, inoffensive, center-left-coded responses,” he said in a Slack message. “The result is a kind of bureaucratic blandness with a particular ideological coloring, reinforced by the training data’s provenance (mostly text produced by educated, English-speaking, online populations).” This analysis, he acknowledged, was pasted directly from Claude.


AI companies’ conflicting ideologies

Priyanshu Singh/Reuters

Anthropic is in talks with Blackstone and other private equity firms to form a joint AI consulting venture, The Information reported. It’s the latest sign of how much companies are leaning on AI firms to teach them how to integrate the technology into their own businesses.

At an AI- and creativity-focused conference held by design software company Canva this week, Anthropic product designer Jenny Wen explained that “where users are at and what they understand is a moving target.” We’ve covered companies’ efforts to educate their workforces on AI, from hosting boot camps to offering prizes for those who best integrate the technology into their workflows. But the question of who should do that work is pitting some firms, like Anthropic, which has indicated it’s the company’s responsibility to find use cases for its products, against others that say those decisions should be left up to the end user.

“It’s a behavior change. It doesn’t fail on the technology side. It fails on the user side,” Tim Moore, CEO of AI-content studio Vū Technologies, said at the conference. “The value you get out is often how much time and learning you put into it.”

It’s probably true that employees who take the initiative will get the most out of AI. But that view stands in contrast to the views of some successful technologists, like Steve Jobs, who was known for saying, “It’s not the consumers’ job to know what they want.” It worked pretty well for Apple.


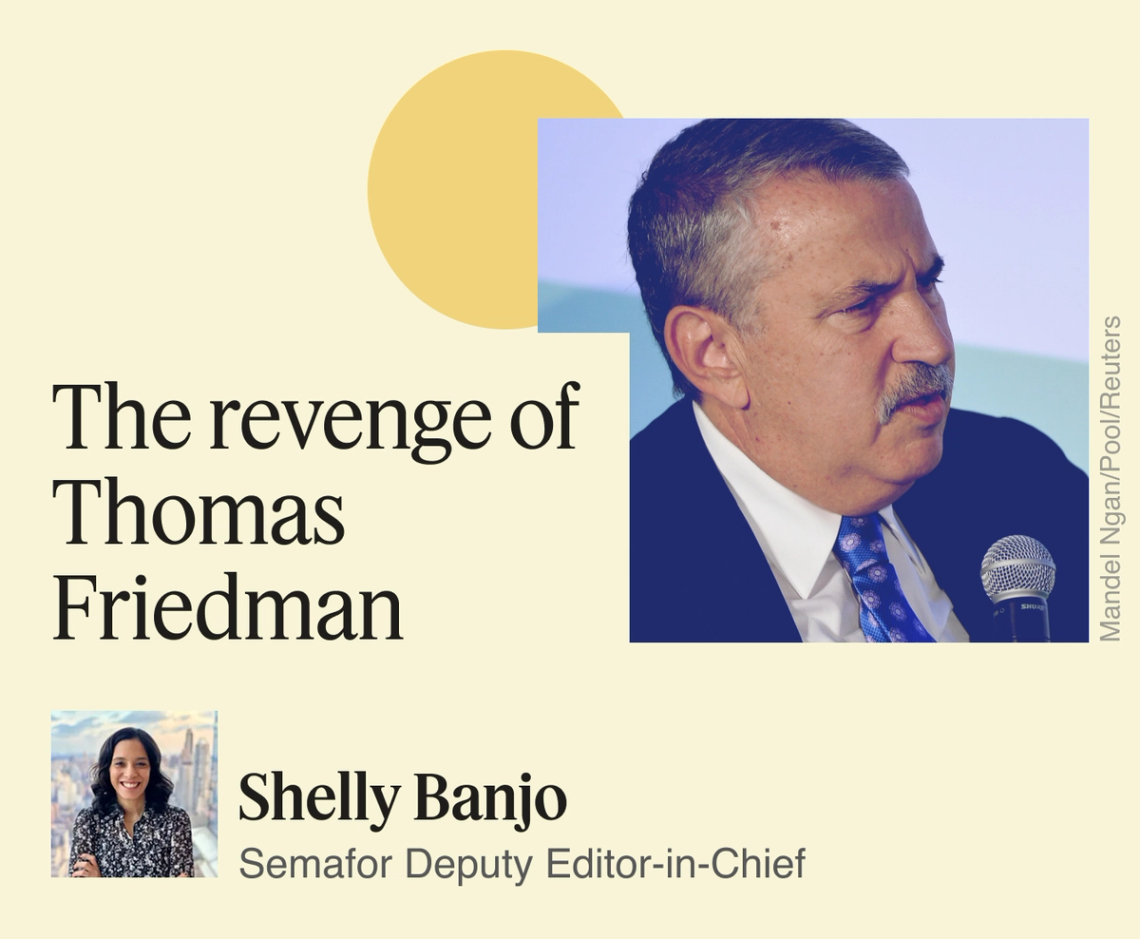

What is your job, really?

To figure out if your job will be replaced by AI, figure out what your job actually is. That was the message of independent analyst Benedict Evans to a room full of graphic designers, marketers, and startup CEOs at the Canva conference.

When spreadsheet software was invented, it didn’t eliminate accountants: their numbers grew by 60% over the next decade, according to Census data. Their job, it turns out, was more than simply adding up numbers; they also offered “experience, authenticity, judgement, reference, curation, suggestion,” Evans said.

Elevator operators, however, lost their jobs completely as automatic electric elevators caught on in the mid-20th century. There was a more efficient way to get from one floor to another. Evans called this the “perfect case of automation,” noting that we no longer think of elevators as being automatic or not. “And that’s probably how it will work” with AI, he said. “Once it works, it’s not AI anymore. It’s just software.”

For now, what workers need to do is figure out what their customers are actually buying, he said: “Do they want something other than just automating the answer?”


AI bots are now hiring humans

Courtesy of Upwork/Joey Pfeifer/Semafor

Hayden Brown has seen some unusual visitors recently to Upwork, the freelancer marketplace she runs. AI agents have been posting job ads to its platform, trying to hire humans to carry out tasks they cannot complete on their own, she told Semafor’s Andrew Edgecliffe-Johnson. They’re not having much success so far, Brown says, even as she predicts that demand from these agentic bosses will grow — a bet that other companies are also making, including RentAHuman, a startup we wrote about last month.

The thing is, most businesses don’t want to vibe code critical operations, she argues. Instead, Upwork is seeing intense demand for “AI orchestrators”: individuals who combine tech fluency with human judgment and problem-solving skills.

Brown, who has been Upwork’s CEO since 2020, has watched the world change after ChatGPT launched in 2022. Since then, the company has created an agent, called Uma, which assists users with tasks from writing job descriptions to conducting interviews. Brown also struck a partnership with OpenAI to offer AI training certifications. She believes freelancers are more likely than companies’ full-time employees to take up opportunities to update their skills. “Freelancers are the ones who move fastest to adopt new technologies and upskill, because it really does put food on their table.”




Corporate America’s new AI mantra

CEOs have a new favorite word: “moat.” At least 118 companies touted the moats they have, or are building, against AI this quarter, AlphaSense data shows. That’s a record, and the quarter isn’t over yet.

They’re trying to pull up the drawbridge against the oncoming agentic hordes and to tamp down investor panic about which SaaS businesses will survive the vibe coding era. “[Investors are] trying to figure out who’s the Amazon.com and who’s the Pets.com,” says Autodesk CEO Andrew Anagnost, drawing a comparison with the dot-com era.

Etsy’s moat is its handmade goods in a world of AI slop — “being able to buy something that is really meaningful,” CEO Kruti Goyal said earlier this month at an industry conference. For Palantir, it’s just sweat equity, I guess. “Twenty years of grinding has built a unique moat,” Palantir CTO Shyam Sankar said earlier this year.

The moat discourse is a grim twist on the buzzwords of past earnings cycles — remember when every company had a blockchain strategy? — and shows how nervously executives are watching their stock prices as AI barrels toward them. There’s little evidence that investors are buying most of those narratives. (Palantir is, of course, doing fine.) And software companies that help organize companies’ data without bringing any of their own are in trouble, Uber CEO Dara Khosrowshahi told us last week on Semafor’s Compound Interest show: “If you’re a thin UI layer on top of, let’s say, systems of record, you’re going to have to earn your keep,” he said.

— Rohan Goswami


AI bubble driving interest in chip alternatives

Courtesy of Great Sky

Tech companies’ capex problem is driving research and investment in new kinds of chips that aim to lower the cost and energy needs of data processing. That’s what newly launched chip company Great Sky is trying to do with an alternative framework to the widely used GPU, it told Semafor exclusively. Its device, a superconducting optoelectronic network, or SOEN, uses light to communicate data, while GPUs use electrons. The startup claims it can process videos more than 1 million times faster than conventional models running on standard GPUs, with less energy.

“You can spend [a fraction of the cost], not have to co-locate with a nuclear power plant, put it in existing data centers, and use less power than a house,” said CEO Jeff Shainline, formerly a researcher at the National Institute of Standards and Technology. The company raised $13 million — still a drop in the bucket in today’s fundraising ecosystem — in a round led by San Francisco-based Bison Ventures, and plans to begin delivering chips later this year.

Great Sky and fellow upstart d-Matrix, which raised $275 million last year, are trying to chip away at big guns like Nvidia and AMD: a moonshot, but a much-needed one. Capex and energy needs are still the largest hurdles to AI’s success.


Anthropic’s efforts to write a 30,000-word “constitution” for its AI appear to be working. The document constrains Claude’s behavior, including by setting limits on dishonesty and causing harm. Researchers broke the document down into 205 rules and found that newer, constitution-trained models were much less likely to break them than older ones. Because AI-risk experts argue that human values are complex and that smart AIs would find dangerous loopholes, successfully instilling the constitution would be “a big deal for safety,” the authors said. The results are not perfect. Claude still occasionally used fabricated data, and its rules sometimes conflicted: An AI instructed to lie must break either the rules on dishonesty or the rules on following operator instructions. But the researchers were “fairly impressed.”
