Almost Timely News: 🗞️ 18 Ways To Save AI Token Budgets (2026-05-17)

Save time, save money, get better results

Content Authenticity Statement

99% of this week’s newsletter content was originated by me, the human. The TLDR image was generated by ChatGPT. Learn why this kind of disclosure is a good idea and might be required for anyone doing business in any capacity with the EU in the near future.

Watch This Newsletter On YouTube 📺

Click here for the video 📺 version of this newsletter on YouTube »

Click here for an MP3 audio 🎧 only version »

What’s On My Mind: 18 Ways To Save AI Token Budgets

In this week’s newsletter, let’s talk about making AI as efficient as possible. By efficient, I specifically mean using as few computational resources as possible. I collaborated on a piece with Andy Crestodina over on his blog about document formats and making AI more efficient, but I wanted to dig much deeper into the more technical stuff here.

This past week the term “token budget” kept coming up over and over in conversations, particularly with enterprise leaders. When they talk about token budget, they mean the amount of AI that their organizations are allowed to consume - based on what they’re paying at an enterprise level to companies like Anthropic, OpenAI, and Google. This newsletter accompanies Andy’s article on AI efficiency, which I was pleased to contribute to.

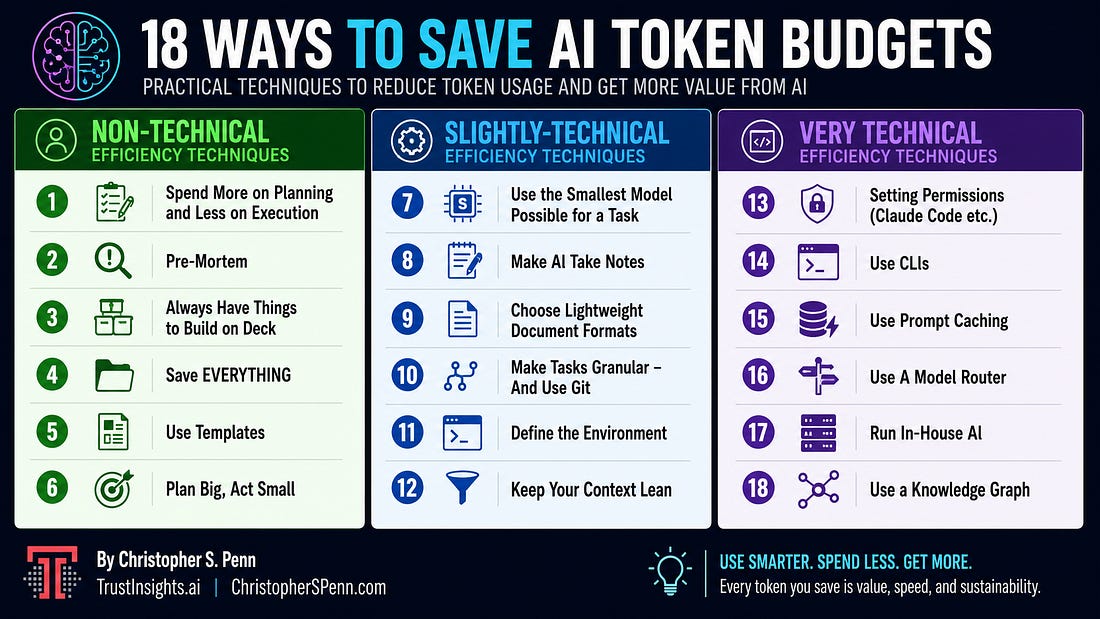

TLDR: Here’s an Infographic

In case you don’t have time to read all 10,000 words. Image made by ChatGPT from this newsletter’s content.

Part 1: Why Do We Care About AI Efficiency?

Companies like Anthropic, OpenAI, and Google allow customers to buy large blocks of tokens to be used with things like AI agents and agentic coding tools. If we look back at the five levels of AI, level one was the ChatGPTs of the world, where you didn’t really have to worry about token budget. You could pretty easily break even with things like $20 a month subscriptions, because the average person’s not going to blow through hundreds of millions of tokens in a single chat. The architectural limitations of the software prevented that in the first place: those tools would lose context so quickly that they would never come close to hitting those budgets.

But once level three systems like Claude Code, Claude Cowork, OpenCode, etc. started debuting and tools became more autonomous, it became entirely possible for AI tools to consume mass quantities of tokens, hundreds of millions of tokens in a single session.

Now, as a brief primer and a reminder, a token is what you get when you take something like a word or some pixels and digest it down into mathematics. You digest it down into a number, and then generative AI models are built by measuring the statistical relationships of different tokens to each other. That’s how large language models, vision language models, and everything else in generative AI works. That’s why you can say “I pledge allegiance to the” and the prediction for the next word is almost certainly going to be “flag” in North American English.
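To make that concrete, here is a minimal toy sketch of the idea, not how any production model actually works. It assumes a whitespace tokenizer (real tokenizers split text into subword pieces) and a made-up four-sentence corpus, and it predicts the next token purely from counts of which token followed which. Every function name here is hypothetical and exists only for illustration.

```python
from collections import Counter, defaultdict

def tokenize(text):
    """Toy tokenizer: lowercase and split on whitespace (real ones use subwords)."""
    return text.lower().split()

def build_vocab(tokens):
    """Assign each distinct token an integer ID in order of first appearance."""
    vocab = {}
    for tok in tokens:
        if tok not in vocab:
            vocab[tok] = len(vocab)
    return vocab

def train_bigrams(token_ids):
    """Count how often each token ID is followed by each other token ID."""
    counts = defaultdict(Counter)
    for prev, nxt in zip(token_ids, token_ids[1:]):
        counts[prev][nxt] += 1
    return counts

def predict_next(counts, prev_id):
    """Return the ID that most often followed prev_id in the training text."""
    return counts[prev_id].most_common(1)[0][0]

# Made-up training text, purely for illustration.
corpus = (
    "i pledge allegiance to the flag "
    "we salute the flag "
    "they raised the flag "
    "she painted the fence"
)

tokens = tokenize(corpus)
vocab = build_vocab(tokens)
ids = [vocab[t] for t in tokens]          # words digested down into numbers
counts = train_bigrams(ids)
id_to_token = {i: t for t, i in vocab.items()}

prediction = id_to_token[predict_next(counts, vocab["the"])]
print(ids[:6])       # the first sentence as token IDs
print(prediction)    # the most likely word after "the" in this tiny corpus
```

In this tiny corpus “the” is followed by “flag” three times and “fence” once, so the sketch picks “flag”. Real models learn far richer relationships than adjacent-word counts, but the principle of turning text into numbers and predicting from statistics is the same.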

Tokens are the unit of work as well. When a model is doing predictions, it gets input tokens, which are the things that we prompt it with: documents, our chats, our voice memos, our images, our videos. The outputs are also tokens, when the model creates things such as words, code, images, music, etc. Everything consumes tokens.

One of the quirks of generative AI is that AI models, regardless of who makes them or how they’re made, have absolutely no memory. They are completely stateless, which means that they remember nothing from one interaction to the next. When we are chatting with the model, the entire chat is passed back through the model so it can make its next predictions. What we say and what it says all becomes part of the next prompt.

Imagine you’re talking to a friend, and you’re texting with this friend, and for some reason, they copy-paste the entire conversation back to you every time they respond. That would be a strange friend, but that’s exactly what’s happening when you’re having a chat with AI.
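Here is a rough sketch of that copy-paste behavior, under loud assumptions: `count_tokens` is a crude stand-in that counts whitespace-separated words rather than real tokens, `send` is a hypothetical function that simulates a request instead of calling any provider’s API, and the conversation is invented.

```python
def count_tokens(text):
    """Crude stand-in for a tokenizer: one token per whitespace-separated word."""
    return len(text.split())

def send(history, user_message, reply):
    """Simulate one stateless request.

    The model remembers nothing, so the input is the whole prior
    conversation plus the new message. Returns the updated history
    and the number of input tokens this single request consumed.
    """
    history = history + [("user", user_message)]
    input_tokens = sum(count_tokens(text) for _, text in history)
    history = history + [("assistant", reply)]
    return history, input_tokens

# Invented conversation, purely for illustration.
turns = [
    ("What is a token?", "A token is a chunk of text turned into a number."),
    ("Why does that matter?", "Because models are billed and limited by tokens."),
    ("So longer chats cost more?", "Yes, every turn resends everything before it."),
]

history = []
per_turn_input = []
for user_message, reply in turns:
    history, used = send(history, user_message, reply)
    per_turn_input.append(used)

print(per_turn_input)  # input size grows every turn, even for short questions
```

Each question is only four or five words long, yet the input for each request keeps growing because everything said earlier rides along with it.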

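And so token usage compounds as a chat gets longer. Every new turn resends everything that came before it, so the total number of tokens consumed grows roughly with the square of the number of turns rather than in a straight line. It’s a phenomenon called quadratic scaling.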
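A back-of-the-envelope sketch of that compounding, assuming every turn adds the same number of tokens to the conversation (500 here is an arbitrary illustrative figure) and ignoring things providers may do to soften it, such as caching or trimming old context:

```python
def cumulative_input_tokens(turns, tokens_per_turn):
    """Total input tokens across a chat where each request resends the full history."""
    total = 0
    context = 0
    for _ in range(turns):
        context += tokens_per_turn   # the conversation grows by one turn
        total += context             # and the whole thing is sent again
    return total

def closed_form(turns, tokens_per_turn):
    """Same total as a formula: tokens_per_turn * (1 + 2 + ... + turns)."""
    return tokens_per_turn * turns * (turns + 1) // 2

for turns in (10, 20, 40):
    total = cumulative_input_tokens(turns, 500)
    assert total == closed_form(turns, 500)
    print(f"{turns} turns: {total:,} input tokens")
```

Under these assumptions, doubling the length of the chat roughly quadruples the total tokens consumed, which is the signature of quadratic growth.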

When the executives I talked to this week bring up token budgets, what they’re really asking is how to make AI more efficient. They’re given a certain amount of budget to work with, and one over-eager developer who deploys an AI agent could consume that budget at an alarming pace. One person said that their organization had already hit its token budget on the 13th day of the month, and that budget was not small. So efficiency is something that enterprise leaders care about.

How can we make the most of this budget? You don’t want to underuse it because there isn’t a single provider that offers rollover on all tokens purchased - it’s use it or lose it. But you don’t want to go over budget either, and then get locked out or end up paying overage charges, what companies like Anthropic call extra usage. So those are key considerations when it comes to token budgets.

For the individual, many of us don’t pay for the super deluxe top-tier AI subscriptions, but even in those subscriptions, you are given a limit. Anthropic’s Claude Max subscriptions have exceptionally opaque token budgets that give you a percentage of how much you’ve used. You have no idea how much you actually have to work with. Other companies like Minimax tell you how many requests to the API you have each week, and the tokens can flex based on that.

People who pay for the low-end plans, the $20 a month plans, the $25 a month plans, hit usage limits very, very quickly. So it is absolutely imperative that they be as efficient with AI as possible, keeping their token usage low so they get more time with the model before they hit the ceiling.

Finally, as Andy mentioned in his